One Year On: COVID-19 and Digital Rights

By Nani Jansen Reventlow, 7th April 2021

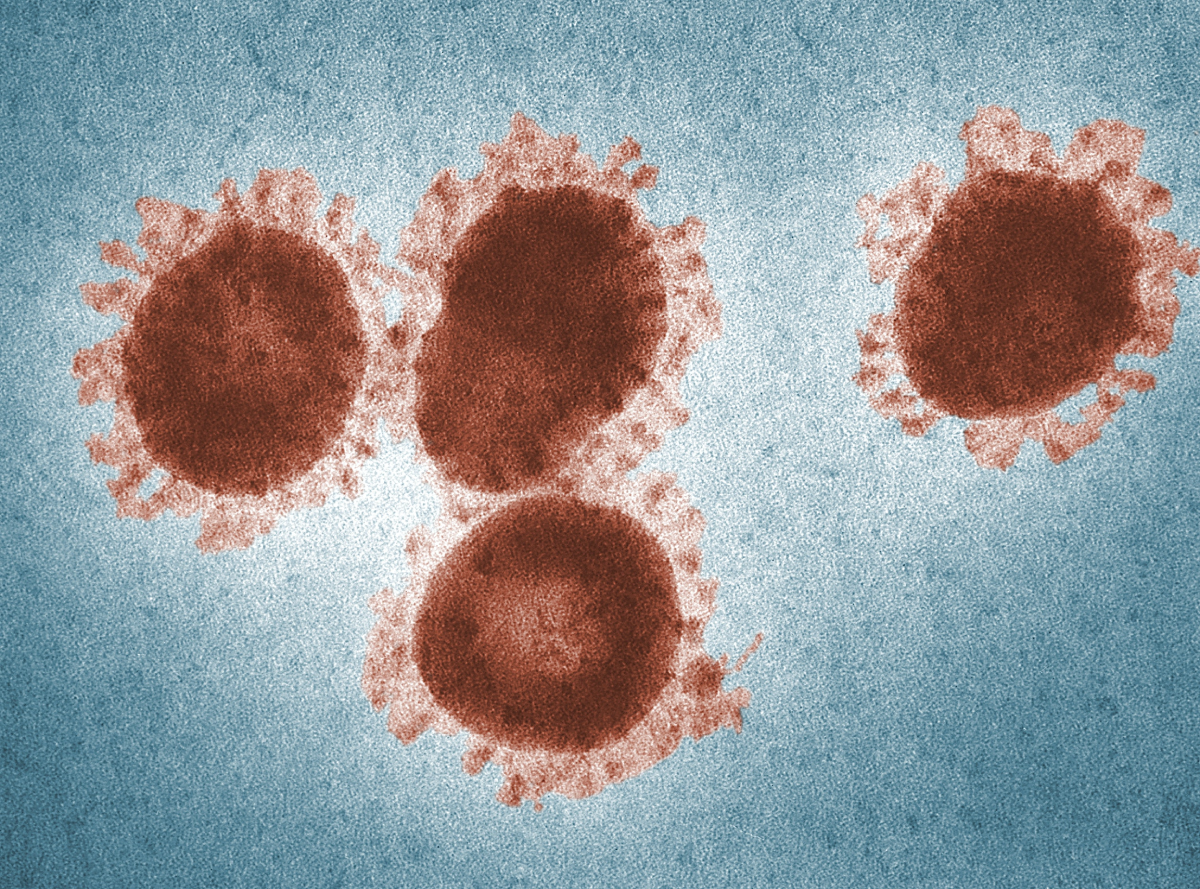

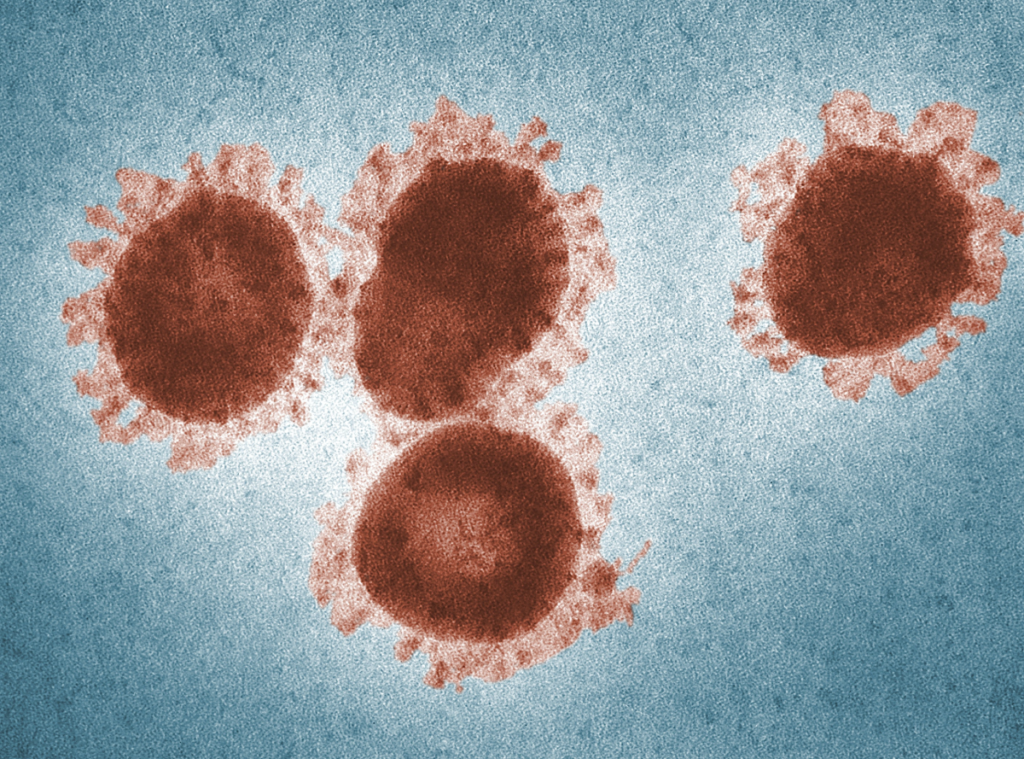

Last year, in the early days of the pandemic, we declared the COVID-19 pandemic a crisis for digital rights. Today on World Health Day, one year on, we’re asking: how has the situation evolved? Have our fears materialised, and has the coronavirus changed the digital rights landscape forever?

Last spring, digital rights challenges were spreading as quickly as the virus itself. Worrying trends, from “biosurveillance” measures to tracking apps, sprang up almost immediately, spurring digital rights activists into action.

Back then, many feared that intrusive surveillance measures and intensive data collection would proliferate in the coming months. One year on, such measures have not only become increasingly common; they have also been rapidly normalised across the globe.

Mounting cases

In DFF’s own COVID-19 litigation fund, launched to provide emergency support against potential digital rights violations, the issues tackled by grantees have ranged from worrying COVID-19 tracking apps to increased surveillance of university students.

In the UK, Big Brother Watch plans to take a claim to the High Court against thermal scanners, which are now deployed in many public places, from schools to shops. The organisation is hoping for acknowledgement that the data garnered from these scanners is personal data, and that impact assessments must take place before these machines are used.

Meanwhile, in Germany, Gesellschaft für Freiheitsrechte (GFF) is taking legal action against health insurance providers that are transferring the pseudonymised health data of millions of people to institutions for research purposes. GFF fears that the security standards protecting this sensitive health data are too weak, and that certain unique data could later be linked back to the individuals concerned.

A global problem

The same concerning measures recur across the globe.

With the worldwide vaccine rollout in full swing, many countries have begun to make use of “immunity passports”. Israel has put in place a “green pass” system allowing only those who are vaccinated or who have already recovered from COVID-19 to visit restaurants and attend events. China has launched a digital vaccination passport for those planning to cross borders, while in the UK, there are proposals to introduce vaccine certificates in order to gain entry to venues such as pubs.

Many airlines are also promising to make use of these passes to prevent travellers from spreading COVID-19, despite the fact that most of the world is unlikely to have access to the jab in 2021.

But these kinds of immunity passports give states huge powers to surveil, and the risk of mission creep – the possibility of the collected data being repurposed in other contexts – is high.

The concept of such passports is inherently discriminatory, creating yet another system through which to exclude certain individuals. As always, those excluded are likely to include already-marginalised groups who lack access to vaccines.

The all-seeing eye

Surveillance, in forms both old and new, has been deployed extensively throughout the pandemic. New ways to track people that might, in previous decades, have been widely acknowledged as eerily Orwellian now have the justification of protecting public health.

Israel, for example, is offering travellers arriving from abroad the option of an electronic bracelet paired with a wall-mounted tracker. The system is alerted when individuals take off the bracelet or go too far from the tracker.

Security risks and data insecurity are endemic to tracking apps. In Europe, the French contact tracing app was deemed last year to be not fully compliant with the GDPR, while in other countries, such as India, using a tracking app became de facto mandatory for many.

One year on

It’s a truism that big societal shifts often need a crisis to happen, and in the case of the pandemic, the cliché rings all too true. Digital technology was quickly inserting itself into ever more areas of our lives even before COVID-19, but the last year has seen the pace of change reach light speed, propelled by panic about the spread of the virus.

This normalisation of intrusive or discriminatory technologies should not go unchecked. Even with the pandemic stretching on longer than we might have initially hoped, we must continue to scrutinise all new measures, and ensure they don’t stick around once they’re no longer needed.