A season of Digital Rights for All: the case for community-centred strategic litigation

Digital Rights for All was built on the understanding that digital rights are social and racial justice issues. It is the first initiative that came out of the work initiated by Nani Jansen Reventlow around decolonising the digital rights field in Europe.

When we started planning in March 2021, we sent a survey to more than 200 racial, social and economic justice organisations active in at least one of the member states of the Council of Europe. We aimed for a wide range of organisations, from grassroots organisations with little to no funding to bigger Europe-wide organisations with mostly paid staff.

After more than a year of the initiative and eight workshops (Talking Digital, Digital policing, Digital policing of migration, Digital oppression at work, Digital safety, Digital rights to health, Movement lawyering and community-centred strategic litigation and advocacy), including two in-person events, we have learned a great deal. The format of the workshops changed to better meet participants’ expressed need to connect with one another and exchange experiences and practices. For the online meetings, we moved from fixed dates, decided by DFF in advance and open to a wide list of invited organisations, to proposing several possible dates to a smaller number of organisations working specifically on the topic, each of which could invite another organisation from its network. The presentation part of each workshop was shortened from 50 minutes, divided into two blocks of 25 minutes, to one or two 15-minute presentations, preceded and followed by time for participants to exchange with each other and map challenges and power-building strategies.

Finally, we not only reduced the number of workshops foreseen but also their duration to account both for the limitations of online meetings and the specific time and resource constraints weighing on grassroots racial, social and economic justice organisations.

All these changes are linked to one central learning: human rights work is community work and community work necessarily means community-centred work.

It seemed therefore fitting that we concluded this first round of Digital Rights for All workshops with a two-day in-person retreat on community-centred strategic litigation and advocacy.

This blog post aims to support the case for community-centred strategic litigation and share some elements of what can be understood by this term.

In a previous blog post presenting DFF’s strategic litigation toolkit, Jonathan McCully shared a useful definition of strategic litigation: “By definition, ‘strategic litigation’ refers to cases that have broad impact outside the courtroom and beyond the individual(s) involved in taking the case. This includes using the law and justice system to advocate for societal changes that better protect and promote human rights and justice.”

Strategic litigation is deemed strategic in relation to its impact: because the court’s decision has a general effect and changes a legal norm, because it helps push a cause forward by creating a public discussion, or because it reveals a structural failure of the justice system to prevent harm.

Classically, however, strategic litigation efforts have too often detached the impact rationale from the people impacted, often deciding that the ends justified the means.

This approach fails to understand how relationship to the law, trauma and goals differ depending on positioning.

The two-day in-person retreat offered the opportunity to hold sessions on community-based advocacy (led by Sarah Chander from EDRi), on coalition-building (led by Zara Manoehoetoe from Northern Police Monitoring Project), on community-centred strategic litigation (led by Laurence Meyer) and on community-driven litigation (led by Systemic Justice).

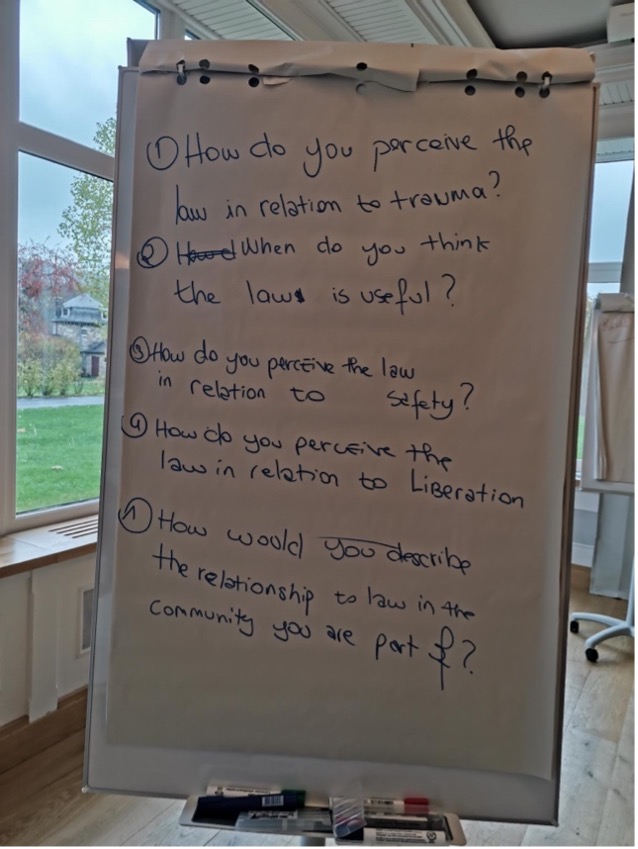

During the session on community-centred strategic litigation, the participants were first prompted to discuss in smaller groups how the law was perceived in the community or communities they are part of, and how it relates to trauma, liberation or safety. These first exchanges underlined not only the deep distrust of the law among many communities facing marginalisation but also how that distrust is often linked to traumatic experiences inflicted by the judicial apparatus.

Community-centred forms of strategic litigation attempt to reconcile the process with the goal, making the case that who is involved in designing the strategy deeply determines both the goals and the means.

In this sense, community-centred strategic litigation (CCSL) is a form of strategic litigation led by the impacted communities in which the goals and the means of the strategic litigation are decided collectively, and which centres the impacted communities in all steps of the process.

Because it centres a community, community-centred strategic litigation aims at empowering its members and the organisations which defend its interests. It aims at building power within the community, enhancing its capacity not only to react to and protect itself from current harms by the means it deems appropriate but also to imagine how to prevent those harms from reoccurring.

As is the case for strategic litigation in a more general sense, CCSL aims for collective change and not only for individual impact. Nonetheless, because it is led by the impacted community or communities at stake, the frontier between the individual and the collective is not hermetic: in the best-case scenario, those two notions are in dialogue. It uses the legal instrument as one of many methods of collective mobilisation in a wider strategy to bring about change.

As such, CCSL is a tool for power-building with transformative goals which decentre legal tools.

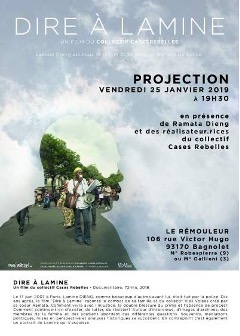

A strong example of community-centred strategic litigation is the work done by the Vies volées (Stolen Lives) collective in France.

On 17 June 2007, Lamine Dieng, a 25-year-old French-Senegalese man, died in police custody in the twentieth arrondissement of Paris after being held on the floor for thirty minutes under the weight of four policemen, the equivalent of 300 kg.

The French police concluded that the death was the result of a cardiac arrest unrelated to the police intervention and took many days to contact the family.

On 22 June 2007, the Dieng family filed a complaint against persons unknown for the murder of Lamine Dieng. From then on, two of Lamine Dieng’s sisters, Ramata and Fatou Dieng, led the struggle to get the policemen involved in his death convicted, until France settled before the ECHR in 2020.

A tool for power-building

In parallel to the litigation work, the Dieng sisters recognised the systemic dimension of the harm they were facing and created the Stolen Lives Collective in 2010 with other families who have been the victims of police violence to regroup, support each other, and coordinate.

Although the scope of the ECHR agreement of 2020 only concerns the Dieng family, the case and the litigation resonated on a much larger scale. They were central to building power within impacted communities to resist police violence in France and to offering support to other families. Since 2011, the Stolen Lives Collective has organised an annual national march against police brutality in association with the different families. The march always stops at the spot in the twentieth arrondissement where Lamine Dieng lost his life. This has inspired other families to organise annual marches in their hometowns, as is the case for the family of Adama Traoré, who organise an annual march in Beaumont-sur-Oise.

Transformative goals

In addition to leading several cases, the Stolen Lives Collective also works on how to prevent these harms from happening in the future. They have, for example, launched a petition demanding a complete ban on all immobilising techniques used by police forces, such as the chokehold, a complete ban on all weapons the collective defines as war weapons, including rubber ball grenades, and a national regulation obliging the police to inform and involve the families when a death in custody occurs (which also means only performing an autopsy once the family has been informed of it and has authorised it).

These demands were voiced and decided upon very early on by the collective, which is composed of impacted families, as the litigation proceedings led to more and more disappointing outcomes. In 2014, the first criminal court dismissed the case; the dismissal was then confirmed by the appeals court and, lastly, by the highest criminal court in France, which ordered the Dieng family to pay 2000 euros to the accused policemen.

All those goals are aligned with a broader transformative goal of real safety for racialised, poor, disabled and migrant communities. In the work of the collective, this translated into demands for measures ensuring more control of law enforcement and a reduction of the weapons available to them.

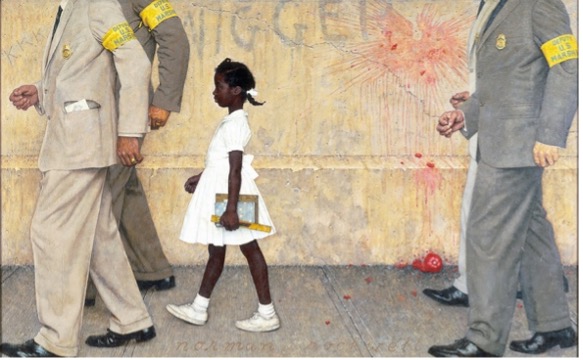

A counterexample is the Brown v. Board of Education of Topeka case of 1954.

The landmark case is notably known for reversing the “separate but equal” doctrine stated in Plessy v. Ferguson and for ruling segregation in schools unconstitutional. It is one of the most famous Supreme Court cases and is often used to illustrate the power of strategic litigation.

Yet, in his article “Brown v. Board of Education and the Interest-Convergence Dilemma” the scholar Derrick Bell explains that “(t)he educational benefits that have resulted from the mandatory assignment of black and white children to the same schools are also debatable.

“…Some black educators, however, see major educational benefits in schools where black children, parents, and teachers can utilize the real cultural strengths of the black community to overcome the many barriers to educational achievement.”

Indeed, many of the original asks by grassroots organisers were less about desegregating schools than about ensuring that Black pupils had access to the same resources in school as white pupils. In that sense, the issue concerned not only the segregationist states ruled by Jim Crow laws but also northern states, in which Black children were likewise going to school in wretched conditions.

In her autobiography, Angela Davis also reminisces on her years in primary school in the South of the United States. After lengthily describing the insalubrious conditions and lack of appropriate material in her school, she writes:

“Without a doubt, the children who attended the de jure segregated schools of the South had an advantage over those who attended the de facto segregated schools of the North. During my summer trips to New York, I found that many of the Black children there had never heard of Frederick Douglass or Harriet Tubman. At Carrie A. Tuggle Elementary School, Black identity was thrust upon us by the circumstances of oppression. We had been pushed into a totally Black universe; we were compelled to look to ourselves for spiritual nourishment.” She then rightly adds “Yet, while there were those clearly supportive aspects of the Black Southern school, it should not be idealized”.

The school system in the United States is still segregated de facto, in many cases more so than it was in 1954 when the Supreme Court decided on the case. The central problem of access to resources for Black pupils hasn’t been resolved. To enforce the decision, nonetheless, young Black children such as Ruby Bridges were put in dangerous situations that required them to be accompanied to class by U.S. marshals. Many others, in subsequent attempts to integrate schools, were sent to schools far away from home, which meant longer bus rides in the morning. Finally, it also led to Black students having fewer Black teachers as role models.

The transformative goals publicly set in Brown v. Board of Education were sadly not attained. Although the litigation was led by an organisation which wished to represent the impacted communities (the NAACP), it was not led enough by the communities themselves and didn’t centre their main demand in the strategy: having good school infrastructure for Black children as well.

A decentring of legal tools

While the Stolen Lives Collective fought in court, they also mobilised other tools – some in response to the failure of the judicial system to recognise and repair the harm caused and its success in protecting the institution of the police at all costs – all to follow the needs and goals set by the families, the collective and the broadening support. The litigation was only one of many tools of community mobilisation in their fight for justice.

In addition to organising the march mentioned earlier, they also supervise a count of deaths in police custody. This count led to the French police publishing those numbers in recent years. They organise judiciary peer support and mental support for the families and coordinate actions. They engage in advocacy work and organise film projections and debates.

The legal battle, which started in 2007 and finished before the ECHR in 2020 with France paying the Dieng family 145,000 euros, was one tool among others to mobilise and organise against police violence in France. It was the start of the mobilisation but not its conclusion: the collective is still active, and the Dieng family are still central to its work and support other families.

Another example of decentring legal tools as the be-all and end-all of strategic litigation is shared by Betty Hung in her article “Movement lawyering as rebellious lawyering: advocating with humility, love and courage”. The DACA system was put in place under the Obama administration and permitted young adults whose entry onto U.S. soil was illegalised by immigration law to access two years of eligibility for employment instead of being directly subject to deportation. Recalling the campaign put in place in 2012, Betty Hung writes: “the DACA campaign was rightfully led by directly impacted immigrant youth who had conceived of the campaign, strategized and planned it, and were with great courage pushing the White House to take action. Legal strategies and tactics played a supporting role in the campaign and were deployed at the right time and in an effective manner.”

Within the framework of CCSL, success can’t rest on a belief in legal systems as a protective agent because, for the reasons mentioned earlier, the law is more often than not used as a tool of oppression.

Ife Thompson, barrister and member of black legal protest UK and BLAM UK, explains for example that one idea around movement lawyering is also to “question prosecutorial practices, and the use of pre-trial detention, fines and fees, working to create for democratic, community-based power and accountability, through, for example, bail funds, court-watching and broadly indicting the court as an unjust institution”.

This means that while the litigation is seeking to provide intermediary remedies to harm, the work of the coalition around the litigation can also imply challenging the legal order as an oppressive one – by making clear how the power dynamics playing in court reinforce injustices instead of providing remedies.

For example, the ADCU, which won landmark cases against Uber, has often reiterated the limits of the enforcement of those decisions, with new laws being passed to counteract their impact, or the burden being put on drivers in precarious situations to engage in tiring procedures to access the payments they are entitled to. In doing so, they also work to make the limits of the current legal system visible while still engaging in litigation.

A radical critique of the current shortcomings of the judicial system also opens the door to imagining alternative pathways to justice, which can be seen as another goal of CCSL. It means using strategic litigation to create collective space for imagining better forms of justice.

Strategic litigation as a space for radical imagining

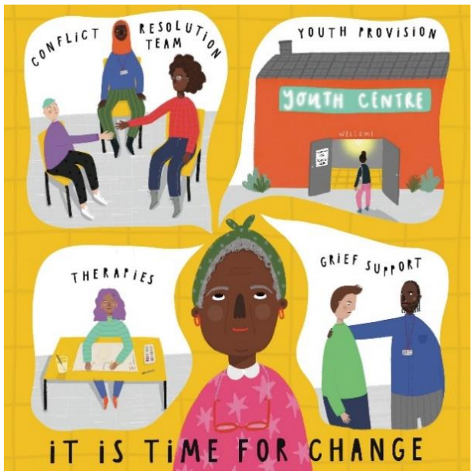

In 2022, ten young Black men were convicted, four for conspiracy to murder and six for conspiracy to cause grievous bodily harm. In a statement issued prior to the trial verdict, the organisation Kids of Colour said:

“There has been no murder. There has been harm committed by a small minority, which has been admitted to. There is no victim at the centre of this case. While we do not seek to minimise the harm caused, as defence teams have argued, there was no intention or agreement to murder, and that has been denied by all. Two have pleaded guilty to the GBH count.”

All of the ten young Black men convicted had lost a friend who was murdered. The four convicted for conspiracy to murder were convicted on the basis of being part of a Telegram group created following the death of their friend. As stated in a Guardian article, “none of the four had any weapons, nor took part in any violent acts or “scoping missions” to locate individuals to be targeted for violence.” They were sentenced to eight years in prison.

Kids of Colour followed the process and took action to challenge the idea that this trial, which relied on the problematic legal ground of joint enterprise, could bring any justice for the harm that was committed. They also highlighted how joint enterprise was used to criminalise young Black men just because of the music they listened to, the friends they had and their reactions, in a Telegram chat, to losing a friend a few hours after learning about it.

In addition to organising demonstrations and sharing what was happening in court on social media, thereby challenging the narrative that portrayed the group as members of a gang, they organised a community support campaign, asking community members what they would offer these young men if the sentences were suspended, to ensure accountability and mentoring, among other things.

“In June 2022 we asked you to offer your skills, expertise and care to 10 boys facing prison sentences, to show that as a city, we wanted suspended sentences, and healing-centered approaches to youth violence. Over 500 of you contributed, and your commitments were incredible.”

They received 517 responses, from individuals and organisations, ranging from attending monthly accountability meetings, regular phone calls, access to networks to ensure employment, to loss and grief support, childcare support, and access to non-educational activities such as music or sport.

This community support showed concretely how different, multifaceted answers to harm could be put in place outside of the prison system. The report was shared during the trial by the defence to strengthen the legal argument for suspended sentences. It made abundantly clear how the judicial system wasn’t fit to repair harm and provide healing, while also making other pathways to justice tangible in a collective imagining effort.

Community-centred strategic litigation can take many forms: community lawyering, movement lawyering, community-driven litigation – depending on the needs. Those forms of lawyering understand the legal system as a site of oppression which reproduces problematic dynamics.

It leads to recognising that the methods, the knowledge and the analyses coming from racial, social and economic justice movements are the ones relevant to creating real change towards justice, including when it comes to tech-related harm. Audre Lorde taught us that only other tools can create new houses. Community-centred strategic litigation can be one of these tools, among many others, to create desirable presents and futures for all.