Reflections on work platforms and their political action, in light of the recent agreement on a European Directive regulating them

As we know, one of the most significant consequences of the 2008 Great Recession, among many that still linger, has been the pronounced technological optimism it unleashed. That optimism grew out of an alliance between two parties. On one side was venture capital, eager to invest in new sectors that would dazzle the world. On the other was the fast-paced, chaotic world of start-ups, whose no less ambitious goal is to revolutionize entire markets, transform the way certain things are done and promise colossal rates of return.

In this whole speculative adventure, one of the favorite targets has been work platforms. Their disruptive mission depends on nothing less than legitimizing a new labor model: the “collaborator”, a figure supposedly halfway between the self-employed worker and the salaried employee. In its ideal version, the collaborator would be a kind of self-employed worker who enjoys certain basic coverage and protections: a fixed hourly rate, accident insurance, holidays, sick leave, a night-work bonus and the right to training. The stated aim is to give citizens the chance to earn income in new ways through flexible work. The price is giving up the rights salaried workers enjoy, namely unionization, collective bargaining, a fixed wage, guaranteed coverage and rest, and also taking on the obligation to pay their own social security contributions.

As might be expected, platforms have run into different problems and forms of resistance along this ambitious path, depending on the territory. In some countries, such as France, liberal governments have tried to capitalize on the platforms' rise as a bet on the future. They have done so even though a long judicial conflict has been under way there and the country's highest court has ruled against the platforms twice. Conversely, where the obstacles have been tougher and the relevant institutions have opposed unregulated expansion, the result has been a fierce political, judicial and media battle. That battle has led to workplace harassment, anti-union persecution and various violations of fundamental rights.

All this means that the success or failure of these companies does not depend mainly on developing revolutionary technologies that create new sources of income. The critical factor is the political work they are able to carry out. The sector proposes a form of organization that violates labor rights and democratic agreements, so sooner or later these companies collide head-on with existing regulations. That collision is therefore a predetermined stage on every platform's route, and it is hard to imagine things turning out otherwise. It would also be simply naive to think that venture capital will be drawn only to viable companies that respect established rights. It has proven to have a weakness for companies willing to push for deregulation and upend the status quo to their own advantage.

That is why, over these years, we have watched work platforms deploy every kind of strategy to achieve their goals. These strategies have directly affected the lives of thousands of workers and called into question the foundations on which regulatory frameworks rest. This is where the delicate part lies. Unfortunately, experience suggests that the most aggressive and radical platforms are the most likely to secure the funding they need to expand worldwide. Recklessness and irresponsibility are proving to be among the traits most valued when it comes to guaranteeing investors a return.

In this sense, the scant willingness around the world to sanction platform entrepreneurs helps explain one of the underlying problems. Governments are pursuing digitalization and trying to adapt their productive structures to the near future, and this type of company arrives hand in hand with that goal. The result seems to be that tech executives and their financiers have become untouchable. Violating the rights of workers in this sector has become an everyday, unpunished act. There is a sense that a certain margin of error is tolerated in these new ways of working until, at some point, the companies hit on the right formula. Meanwhile, we are witnessing financial bubbles and labor experiments that follow an “anything goes” policy.

Consider the case of WeWork. This start-up, which managed coworking spaces, came to be valued at an outrageous 47 billion dollars. Within a few weeks that valuation collapsed, leaving thousands of employees out on the street. WeWork was an ordinary company running a traditional, everyday business with a digital veneer, and that was enough to win over investors and inflate its own bubble. After the collapse, its CEO walked away with close to a billion dollars for himself, largely because he had sold his personal shares in time. As they say in my country, “a soldier who retreats lives to fight another war.” This can only be described as absurd. Here was a young man who spent 10 years selling and inflating a company that was never profitable and ended up a multimillionaire. He has not spent a single second in prison. A perfect deal.

In this way, the various administrations have been gripped by doubt. They have watched as everyday practices erode established limits, and they have shown little ability to respond to the spectacular power of these multibillion-dollar companies and their technologies. Within a few months, these companies create activity for thousands of workers of every kind, and in doing so they fuel a broad critique of state bureaucracy and regulation. We know well that there is no better pressure tool than a growing market, however irregular its management may be.

Consequently, this model is not sustainable over time and it endangers the foundations of democracy, yet it is undeniable that it has gradually imposed itself. That leaves an obvious question: how far does the sovereignty of countries really extend when it meets the self-proclaimed future of the economy?

Despite all this, when people think about work platforms and their short but intense disruptive campaign against labor rights, they tend to focus almost exclusively on the main features of the legal disputes the platforms have triggered, or on the few legislative processes they have been part of. This is clearly a problem. The discussion has centered above all on legal technicalities and regulatory fine print about how platforms should be classified under different legal frameworks. It has been much harder to open a deeper conversation about the figure of the collaborator and several related matters. These include the role played by labor algorithms, the discursive or ideological foundations that sustain the platforms, their current and future consequences, and the vision of society they represent.

Throughout this time, the platform economy has not only been transforming ways of working. Above all, it is succeeding in calling into question the boundary between what we understand as a salaried employee and a self-employed worker, between a worker and an entrepreneur. This is a well-defined business movement. It has developed the technologies and discourses needed to tear down structural barriers and push the frontiers of entrepreneurship outward until entrepreneurship fits in the palm of your hand. Indeed, today anyone can realistically become a small, multitasking entrepreneur without major upfront costs.

In the vast majority of cases, however, these are precarious ventures, since everything depends on each worker's capacity for self-management in the effort to make ordinary resources profitable. These resources might be a motorbike for deliveries, a room for hosting guests in one's own home, a car for working as a driver, or simply the motivation to clean, provide care or do repairs. The idea is that we should view our immediate surroundings through a productive logic that encourages us to become entrepreneurs and take risks.

Since the platforms began operating, we have seen all kinds of people do this, mainly migrants, the unemployed, undocumented people, women homemakers and young people. Rather than organizing to become legitimate political actors who seek to improve their conditions, they have thrown themselves into the market as if they were agents on an equal footing, able to compete while bearing the costs and consequences of their own activity. In other words, what should be a political problem affecting society as a whole is commodified and reduced to an individual problem. All of this is the expression of an attempt to redefine precariousness, depoliticize it and channel it toward the market.

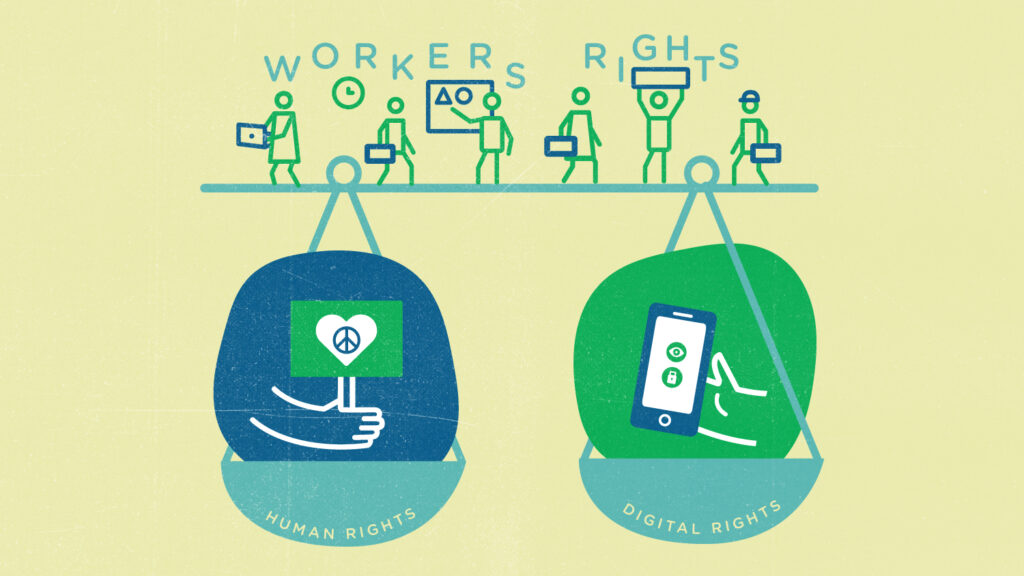

That said, we must also stress that real resistance to the seductive advance of these ideas has emerged. In different parts of the world, workers have organized into grassroots movements, giving shape and strength to a broad citizen current. That current demands something basic for any democracy, namely regulation and protection. It places particular emphasis on data collection, algorithmic transparency, the right to unionize and to disconnect, privacy, limits on working hours and the defense of the employment contract. Above all, it opposes shifting the costs and risks of the activity onto workers.

Although these movements have driven some legislative initiatives, the companies have deployed their full deregulatory power through lobbying and public pressure campaigns to try to stop them. The best example is California's AB5 law. Its application to app-based drivers and couriers was overturned by Proposition 22, which was backed by Uber, Lyft, DoorDash and Postmates and was the most expensive ballot campaign in the state's history. Throughout that campaign, workers and the public were subjected to every kind of pressure.

In any case, and despite all this, there have been a few important victories that should show the way forward. One is the recent approval of the draft European Directive on platform work, which regulates the sector. It establishes a presumption of employment for all workers on service platforms and requires algorithmic transparency. Another is Spain's “Ley Rider”. This law is also based on a presumption of employment, in this case for delivery riders, and it gives their representatives access to the parameters the algorithms use to organize work.

In short, the work-platform sector has clearly managed to cloak itself in the disruptive, promising aura that its technological advances give it, and this sometimes makes it hard to grasp the sector's full reach. We are facing a movement with a well-defined agenda, one whose ambitions go far beyond those of a mere collection of dreamy start-ups. For that very reason, this is not a passing issue but a historical process.

It would be a mistake to be guided by isolated events instead of trying to see the whole picture in perspective. We would be wrong to think that particular court rulings or specific laws will bring this movement to an end. It is not a digital fad that will vanish without a trace, and the platforms have already succeeded in imposing their vision in other parts of the world. In these few years, it has become clear that the platform economy has launched a full-scale campaign to change how work is regulated and to transform the principles behind that regulation.

It is up to us to defend the rights we have won.

Felipe Diez is a sociologist and PhD candidate in Sociology and Anthropology at the Universidad Complutense de Madrid, a member of the Observatorio TAS and spokesperson for Riders x Derechos. He is also a former Deliveroo rider and took part in the discussion group on Spain's Ley Rider with the Unión General de Trabajadores (UGT) trade union.