Big Tech Platforms: With great power and responsibility should also come greater accountability (part two)

This blog post is the second instalment in a two-part series on platform accountability. In part one, we shared some reflections and recent developments around the topic, as well as an invitation to join the Strategic Litigation Retreat we co-hosted last month with Homo Digitalis in Greece. This instalment recounts some of what transpired at our Legal Avenues for Platform Accountability workshop, held earlier this year.

Back in April, we held a workshop in Barcelona aimed at building capacity and sharing knowledge amongst the digital rights community on how to use strategic litigation and a variety of legal frameworks to hold Big Tech platforms accountable for the online and offline harms they bring upon society as a whole, especially marginalised groups and communities.

During the sessions, we focused on both newly enacted and upcoming legal instruments, and also on well-established but under-utilised ones. What follows is a brief description of each session and its key take-aways.

The Digital Markets Act (DMA) and the Digital Services Act (DSA)

We began by discussing the DMA and its potential for curbing the market power and monopolistic practices of Big Tech platforms, as well as the obligations to make them more transparent and accountable under the DSA. Participants reflected on how the DMA could limit platforms’ dominant positions and weaken their business model, and discussed the opportunities that exist for civil society to litigate in alliance with platform competitors through its enforcement mechanisms, or with consumers through collective redress mechanisms. The DSA session focused on practical exercises exploring three potential litigation options that leverage the obligations the act imposes on platforms: adversarial auditing of recommender systems, challenging risk assessment results through public interest complaints, and shining a light on and contesting “shadow bans”.

The Artificial Intelligence Act (AI Act)

Although the AI Act draft proposal itself is more centred on public sector use of AI systems, and its risk-based approach does not create enforceable rights or concrete redress mechanisms, we did identify some potential entry points for platform accountability litigation. For example, the transparency obligations for AI systems used in employment and workers management could play an important part in cases related to platform workers’ rights.

With AI hype at an all-time high, especially when it comes to Generative AI tools such as ChatGPT, Midjourney, and the like, the Parliament’s proposed text has sought to catch up with emerging societal concerns by establishing specific obligations on these types of systems and additional obligations on foundation models (of which Generative AI is a subset), on top of those which would be ascribed to a particular AI system according to the risk category it falls under. On the flip side, Big Tech and industry lobbyists are pushing for a high-risk self-classification loophole that civil society organisations have warned would undermine the entire legislation. For now, all eyes are on the trilogues, which will determine these and other contentious issues around the AI Act.

The Corporate Sustainability Due Diligence Directive

Although participants thought that it was likely that the tech sector would eventually be included in the scope of this Directive under the high-risk category, they were not so optimistic as to how effective the Directive itself would be as an enforcement mechanism, mainly due to the potential fragmentation of the regulatory landscape among member states when implementing it. Nevertheless, there was agreement that it represents a step forward from soft law instruments such as the UN Guiding Principles on Business and Human Rights.

The Regulation on the Transparency and Targeting of Political Advertising and the European Media Freedom Act (EMFA)

One common thread throughout the discussion was the set of discrepancies between the two proposals and the DSA, namely the standards for consent for the processing of personal data for targeted political advertising and content removal, as well as the media exemption carved out in the EMFA. We discussed ways in which these contradictions can be addressed via advocacy and policy work, and what changes to push for in order to improve, optimise and harmonise these regulations for potential litigation once they are applicable.

The Platform Workers Directive

This Directive introduces a fact-based doctrine and presumption of employment irrespective of what is stipulated in workers’ contracts, establishing a solid legal basis for litigation to ensure that platforms observe labour law and guarantee workers’ rights. During the session, we discussed misclassification as misinformation, algorithmic management and surveillance, dynamic pricing, multi-app and standby-time work. We also explored the gaps and areas of overlap between the Directive and other legal instruments such as the GDPR and the AI Act.

The Representative Actions Directive (RAD)

During the workshop, participants expressed interest in diving deeper into the collective redress opportunities afforded by the RAD, which became applicable on 25 June this year, so we held a peer-facilitated session which offered a general but comprehensive overview of some of its most important aspects. These included the current state of implementation among Member States (or lack thereof), key definitions, qualified entities, cross-border cases, injunctions and damages, funding, and cost allocation.

Throughout the remainder of this year and all of next year, collective redress will be one of our main focus areas at DFF. This week, we will be kicking off our newest speaker series titled “Collective Redress – Lessons from Around the Globe”. The webinars will be held every Wednesday from now until the end of November, so if this topic interests you, do tune in, and share amongst your networks as well.

The EU Charter and Anti-discrimination Law

Lastly, the workshop also included sessions on EU Charter Rights and anti-discrimination law, both of which tend to be under-utilised when it comes to strategic litigation in the digital rights field. The session on the Charter was a great opportunity for us to share more about our EU-funded digiRISE programme, which aims to build capacity and bolster the Charter’s potential to promote and protect digital rights, namely through workshops and publicly available resources on the connections between Charter rights and digital rights, as well as litigation strategies reliant on the Charter. Check out the recent Digital Rights are Charter Rights speaker series and newly launched essay series to learn more.

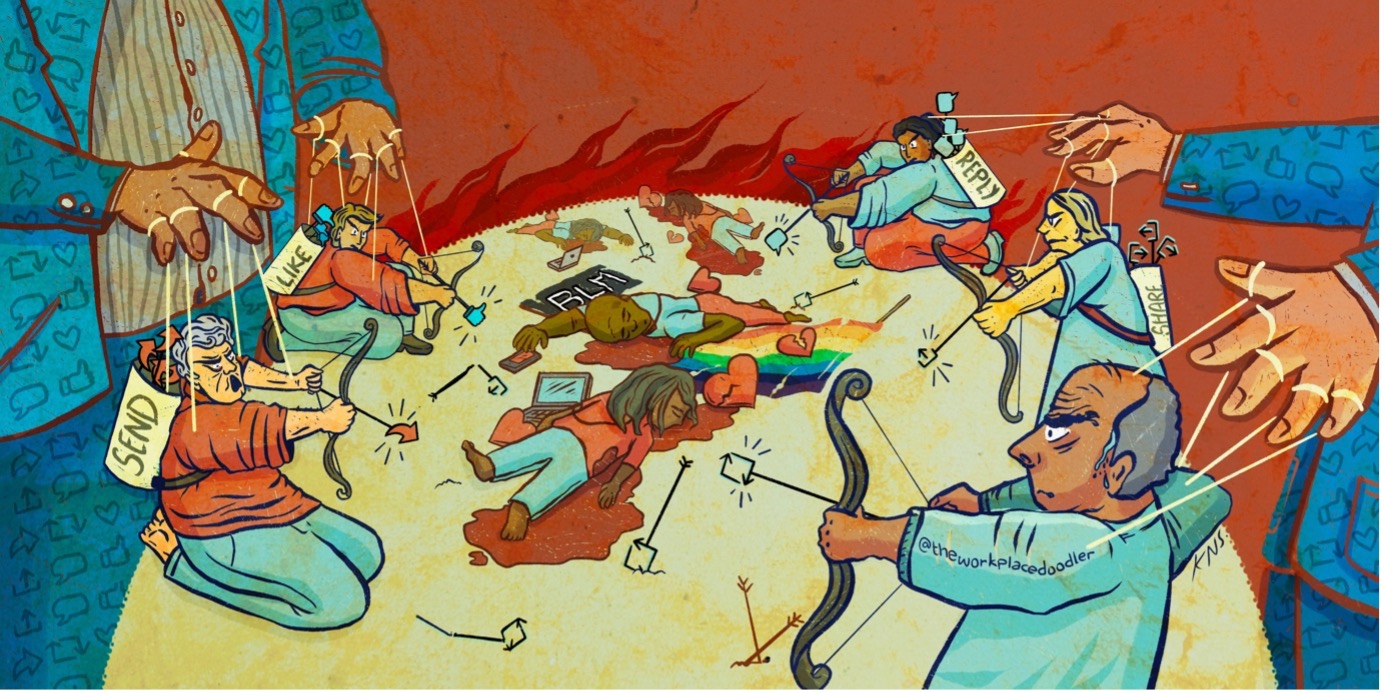

The anti-discrimination law session touched upon topics such as direct and indirect discrimination, intersectional approaches in contrast to compounded or cumulative conceptions of discrimination, standing, burden of proof, and how, despite their opacity, platform algorithms can play a significant role in revealing discrimination. The discussion also included reflections on broader strategic issues, such as how much we should focus on other means of effecting social change instead of only relying on courts, which can co-opt the work of movements, activists and affected communities by re-framing the issues and limiting the scope of their demands.

We invited participants to think critically about their overall approach to legal practice, highlighting the fact that we are more likely to effect social change through strategic litigation if it is embedded in a wider strategy or movement, and interwoven with parallel efforts which may include advocacy, policy work, communications, research, and technical evidence gathering, among others.

We also collectively brainstormed what working closely with, and ideally being led by, those most affected by platform-driven digital harms could and should look like, as well as how to move towards models of litigation which are more community-centred (for example, by engaging in movement and community lawyering) when working on platform accountability issues.

Thanks, onwards and upwards!

We would like to thank all the attendees for the energy and enthusiasm they put into the event, and we are extremely grateful for the valuable insights, knowledge and experience that they shared throughout the workshop. Furthermore, we are looking forward to seeing the potential opportunities for collaboration which surfaced during the workshop materialise into more impactful work and litigation on platform accountability and digital rights issues.

There are already some promising prospects on the horizon. For example, Amnesty Tech are actively working with survivors of tech harms from diverse regions around the world and seeking to bring them together to develop a participatory strategy for challenging Big Tech and demanding justice for affected communities. They are now planning a research and litigation project for 2024-2025 with partners in Eastern Europe, based in part on the connections made and discussions that emerged during the workshop.

Lastly, in the wake of the workshop, and seeking to build on its momentum, one of the participants (along with members of the People vs Big Tech coalition) created a strategic litigation networking channel on Signal. If you are interested in joining, or have any ideas on how to further catalyse any of the platform accountability efforts and opportunities mentioned herein, please do get in touch.

DFF’s Legal Avenues for Platform Accountability Workshop took place thanks to the generous support of Luminate Strategic Initiatives, which you can learn more about here. We would also like to thank the Espai Societat Oberta for their warm hospitality and access to their event space in Barcelona to host it.