Towards a Litigation Strategy on the Digital Welfare State

By Jonathan McCully, 23rd April 2020

Following the DFF strategy meeting in February, we held an in-depth consultation on the “digital welfare state.”

During this consultation, representatives from international human rights organisations, welfare charities, academia, and the digital rights field discussed how we might go about defining the “digital welfare state”.

We surveyed what work is already being done on the issue, and what our shared objectives might be for holding governments to account for digital rights violations in the welfare context.

What is the “Digital Welfare State”?

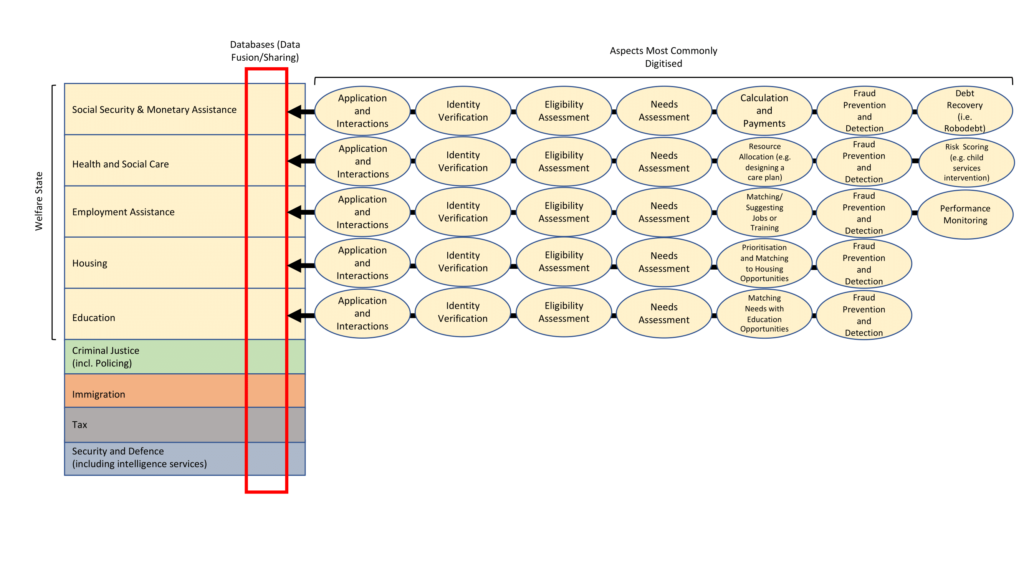

During the consultation, participants were invited to critique a visual representation of the “digital welfare state,” assembled by DFF following conversations with organisations working at the intersection of digital rights and social protection provision.

Many of the participants noted that key aspects of the “digital welfare state” they were working on were reflected in the visualisation. Nonetheless, a number of pertinent observations were made on how to define this emerging concept.

Some participants noted that the term “welfare,” in itself, is context specific and can be a highly politicised term. Other participants noted that the visualisation implied a process of applying for social protection, when some countries proactively or automatically provide individuals with monetary assistance and other services without an individual having to apply for them. These proactive procedures are often fuelled by the processing of citizens’ data that has already been collected by the state in various other contexts.

There were a number of aspects identified as missing from the visualisation. For instance, the visualisation could be adapted to include the use of digital and automated decision-making tools in the context of handling disputes and appeals of welfare decisions.

In the UK, for example, the Child Poverty Action Group has published a report entitled “Computer Says ‘No’”, which highlights the problems experienced by claimants trying to dispute or challenge a decision on their Universal Credit award through online portals. Other participants noted that some services facilitating access to justice, such as free legal advice, could also fall within the definition of the “welfare state.”

Participants also highlighted certain issues that were important to keep in mind when looking at the “digital welfare state.” For instance, migrants, asylum seekers, refugees and stateless persons can face particular difficulties in exercising their rights to social protection and may even be targeted with certain digital tools.

Also, access to the internet is not universal, and welfare recipients in many jurisdictions are simply unable to access online portals to manage their welfare provision or challenge decisions made against them.

Furthermore, many of the digital tools being deployed are designed, built and sometimes even run by private entities. These private entities can hide behind trade secrets and intellectual property, evading the level of accountability we would expect from welfare authorities.

There was broad agreement that some digital tools may genuinely improve access to social protection. However, we must always scrutinise the heightened surveillance and data security concerns that accompany such tools. Where does the data used to build these digital tools come from? Has data collected for welfare purposes been processed securely and lawfully? Does it comply with the principles of data minimisation and purpose limitation? These are the key questions we should ask ourselves when we come across digital systems in the welfare context.

Towards a Shared Vision of “Digital Welfare”

Participants were working on a range of “digital welfare” issues, from supporting welfare claimants in navigating the digital interfaces put in place by welfare authorities to advocating for data protection and privacy across a range of government services. Together, they discussed their shared vision for the “digital welfare state” and identified a number of goals for this work.

There was convergence around the principle that digital tools used in the welfare context should respect human rights “by design,” safeguarding individuals against violations of their rights to privacy, data protection, non-discrimination, and dignity.

Such systems and tools should be inclusive by default, meaning that the starting point should always be that they are accessible to everyone. Digital literacy or internet access should not be a requirement for accessing social protection. Instead, there should always be accessible offline alternatives to the digital tools. Digital tools should not shift the burden of proving eligibility or need onto individuals, and welfare recipients should have full control over the information they share with welfare authorities.

There was also broad agreement around digital tools needing to be transparent and open to review, either by way of freedom of information requests or by making the tools open source.

Where Next?

The conversations we held in February feed into our work in building a litigation strategy that can contribute towards ensuring social welfare policies and practices in the era of new technology respect and protect human rights.

In the coming months, we would like to speak to as many individuals and organisations working on this topic as we possibly can to help us further define the parameters of such a litigation strategy. If you are interested in getting involved, we would welcome your views and input. Get in touch with us!